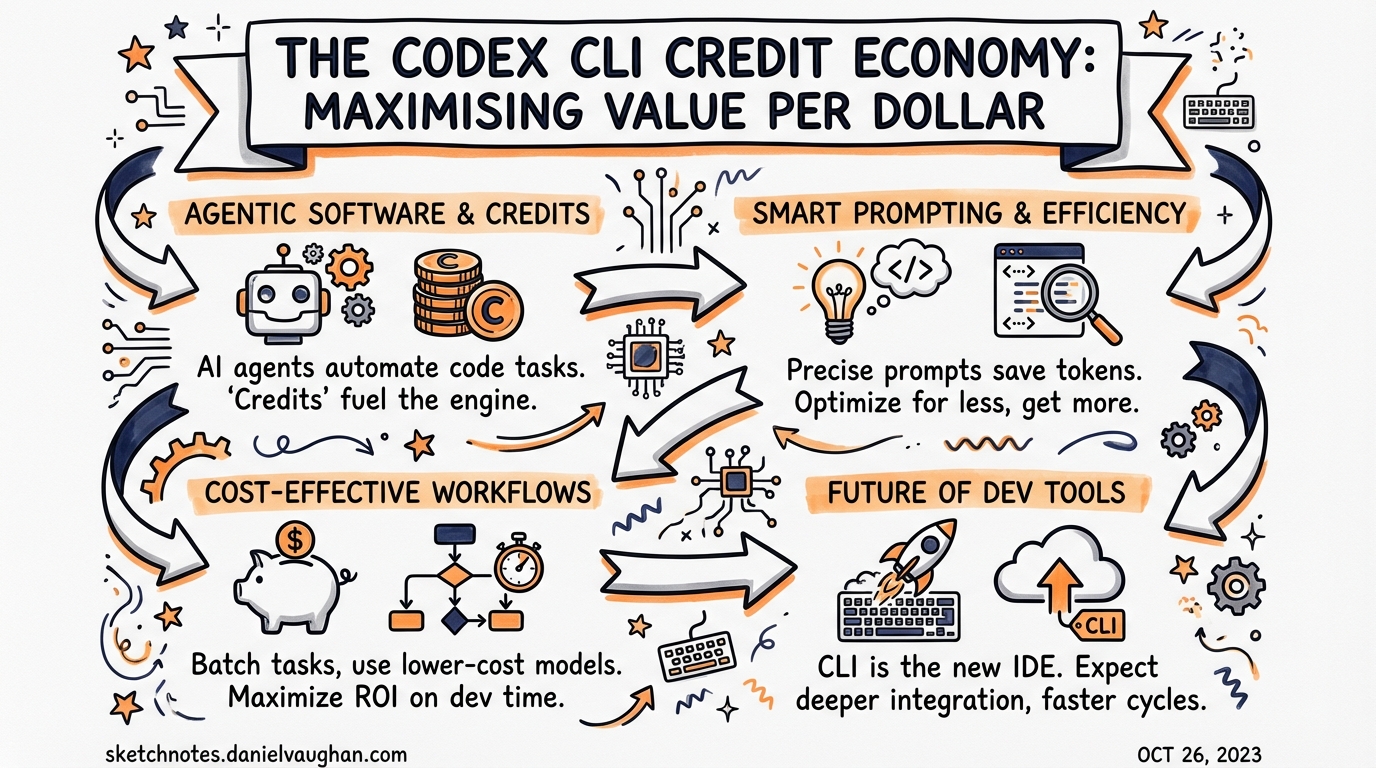

The Codex CLI Credit Economy: Maximising Value Per Dollar

The Codex CLI Credit Economy: Maximising Value Per Dollar

Cost conversations in the Codex community tend to cluster at two extremes: people who are surprised their credits ran out after two hours, and people who are running expensive models on tasks that would have been fine on the cheapest option. This article addresses both. It covers the subscription tiers, the model routing decision matrix including the Spark model, the 4× token efficiency advantage over Claude Code, and how to construct a break-even analysis for your team size.

This article complements Codex CLI Cost Management: Token Strategy, Model Routing and Quota Control which covers the mechanics of

max_tokens_per_session, profile configuration, andpostTaskCompletehook patterns in detail.

The Credit Landscape in 2026

Codex credits are consumed at a rate that depends on three factors: model tier, task complexity, and execution context (local CLI vs cloud task)1.

graph LR

A[Task submitted] --> B{Execution context?}

B -->|Local CLI| C[Lower credit rate]

B -->|Cloud task| D[Higher credit rate]

C --> E{Model tier?}

D --> E

E -->|gpt-5.4-mini| F[~0.2× base cost]

E -->|gpt-5.4| G[1× base cost]

E -->|gpt-5.3-codex-spark| H[Separate limit pool]

E -->|xhigh reasoning| I[~5× base cost]

Local tasks — running codex in your terminal against a local codebase — consume fewer credits than cloud tasks. Automated PR reviews triggered from GitHub, Slack, or Linear route through OpenAI’s cloud infrastructure and cost more2.

The Mini tier (gpt-5.4-mini) is not available for cloud tasks at all. If you are budget-sensitive and running primarily local terminal work, Mini gives you significantly more output per credit than the flagship model.

Subscription Tier Break-Even Analysis

Understanding whether a subscription pays for itself versus API-key billing requires an honest account of usage intensity:

| Tier | Monthly cost | Best for | API equivalent break-even |

|---|---|---|---|

| ChatGPT Plus | $20/user | Individuals, exploratory use | ~50–80 hours/month intensive coding3 |

| ChatGPT Team | $25–30/user | Small teams (3–15 devs) | ~60–90 hours/user/month |

| ChatGPT Pro | $200/user | Power users, agentic workflows | ~200+ hours/month or heavy reasoning model use |

| API key mode | Pay-per-token | CI/CD pipelines, automation | N/A — predictable cost at scale |

| Enterprise | Custom pricing | 50+ devs, compliance requirements | Custom |

The break-even calculation for Pro vs API key hinges on reasoning model usage. If you regularly reach for o3-class models — roughly 5× the token cost of standard models — a Pro subscription’s flat-rate access can be significantly cheaper than equivalent API billing at that model tier3.

When API Key Mode Wins

API key mode is the right choice when:

- CI/CD pipelines where usage is predictable and you need billing separation per team/repo

- Subagent workers on

gpt-5.4-mini— the cheapest path when you can instrument cost exactly - Enterprise multi-project billing where chargeback per team is required

The trap to avoid: using API key mode without max_tokens_per_session configured. A single runaway session debugging a large legacy codebase can consume 500K+ tokens — roughly $8–15 in one sitting4.

The 4× Token Efficiency Advantage

OpenAI-cited benchmarks and independent developer testing consistently show Codex CLI uses approximately 4× fewer tokens than Claude Code for equivalent coding tasks5.

The raw numbers from a Figma-to-code cloning benchmark:

- Claude Code: ~6.2 million tokens

- Codex CLI: ~1.5 million tokens

For a focused TypeScript task:

- Claude Code: 234,772 tokens

- Codex CLI: 72,579 tokens6

This gap has concrete financial implications. At API rates, the effective cost of a task through Claude Code can be four times higher even before accounting for Claude’s higher per-token price5.

graph TD

subgraph "Same task, different tools"

A["Task: Implement feature X"]

A --> B["Claude Code\n~235K tokens\nMore exploratory\nMore explanatory"]

A --> C["Codex CLI\n~73K tokens\nFocused execution\nMinimal commentary"]

end

B --> D["~$6.50 at Claude Sonnet API rates"]

C --> E["~$0.15 at gpt-5.4-mini rates\n~$0.37 at gpt-5.4 rates"]

Why Claude Uses More Tokens

Claude Code’s higher token consumption is not waste — it reflects a different philosophy: Claude reasons aloud, asks clarifying questions, and provides detailed explanations. This is valuable when exploring architecture or debugging complex issues. It is expensive when you already know what you want done6.

The practical implication: use each tool where its communication style adds value. Claude Code for initial design and complex reasoning; Codex CLI for execution, refactoring, and the bulk of implementation work.

The Spark Model: Turbo Worker Economics

GPT-5.3-Codex-Spark runs on Cerebras WSE-3 hardware at 1,000+ tokens per second — a 15× speed improvement over GPT-5-Codex7. During the current research preview period, Spark usage has separate model-specific limits and does not count against your standard Codex quota8.

This makes Spark an unusual opportunity: during the preview, it is effectively free from your normal credit budget.

Credits-Per-Task Routing Matrix

graph TD

A[Incoming task] --> B{Task type?}

B -->|File search / navigation| C[gpt-5.4-mini]

B -->|Test generation, docs| C

B -->|Draft code, exploratory refactoring| D{Speed priority?}

D -->|Fastest iteration| E[gpt-5.3-codex-spark]

D -->|Standard| C

B -->|Complex multi-file refactoring| F[gpt-5.4]

B -->|Bug diagnosis in large codebase| F

B -->|Security audit| G[o4-mini or o3]

B -->|Architecture design| G

B -->|Novel algorithm| G

Approximate relative credit cost by task type:

| Task type | Recommended model | Relative cost |

|---|---|---|

| File navigation, search | gpt-5.4-mini |

0.2× |

| Test scaffolding | gpt-5.4-mini |

0.2× |

| Documentation generation | gpt-5.4-mini |

0.2× |

| Draft implementation | gpt-5.3-codex-spark* |

~0× (separate pool) |

| Iterative refinement | gpt-5.3-codex-spark* |

~0× (separate pool) |

| Multi-file refactoring | gpt-5.4 |

1× |

| Complex debugging | gpt-5.4 |

1× |

| Security audit | o4-mini |

~3× |

| Novel algorithm design | o3 |

~15× |

*Research preview, Pro tier only, separate limits8

The 5-Hour Window: Managing Burst Consumption

ChatGPT subscription Codex limits reset on a rolling 5-hour window, not a monthly cycle1. This has practical implications for agentic workflows:

A session that runs multiple parallel subagents will consume credits faster than sequential work. If you hit your 5-hour window limit mid-way through a large task, Codex pauses — and resumes when the window resets, not when the monthly cycle resets.

Strategies for window management:

- Profile-based model routing — default profile uses

gpt-5.4-mini, explicit invocation required forgpt-5.49 - Context compaction before subagent spawns — running

/compactbefore spawning workers reduces per-subagent context overhead - Stagger spawning on long jobs — rather than spawning 8 subagents simultaneously, stagger by 2–3 turns to avoid simultaneous large context initialisation

- Spark for draft-then-review cycles — use Spark’s separate pool for rapid first-draft generation, then one

gpt-5.4call for review and correction

Subscription Upgrade Decision Framework

graph TD

A[Start: what is my current plan?]

A --> B{Plus - $20/mo}

A --> C{Pro - $200/mo}

B --> D{Hitting 5hr window limit regularly?}

D -->|Yes, 3+ times/week| E{Primary use?}

D -->|No| F[Stay on Plus]

E -->|Agentic multi-file work| G[Upgrade to Pro]

E -->|Team automation| H[Consider Team tier]

C --> I{Using reasoning models heavily?}

I -->|Yes, daily o3 use| J[Pro pays for itself]

I -->|No| K{Monthly token spend > $200?}

K -->|Yes via API| L[Stay on Pro or API + limits]

K -->|No| M[Consider downgrade to Plus]

The key insight: Pro’s value is front-loaded towards heavy reasoning model users and teams hitting the 5-hour window daily. For developers using primarily gpt-5.4 and gpt-5.4-mini on exploratory personal projects, Plus is typically sufficient3.

Practical Tips for Maximising Credits

These recommendations come from the Codex community and official documentation10:

Reduce prompt overhead:

- Trim your AGENTS.md to the essentials — every byte adds to context on every API call

- Disable unused MCP servers; each active server adds schema tokens to every session

- Use nested AGENTS.md files to scope context to the directory being worked on

Model hygiene:

- Set

default_profile = "explore"where the explore profile usesgpt-5.4-mini; require explicit--profile commitforgpt-5.4invocations - Use

max_tokens_per_sessionas a per-task ceiling, not just a safety net — treat it like a budget per task type

Session discipline:

- Run

/compactproactively at ~60% context fill; forced compaction wastes more context than manual - Subagent delegation naturally limits main-session context growth — use it for file-heavy operations

Monitor before you optimise:

- Install

ccusageand run it weekly to identify which sessions are your biggest cost drivers before making configuration changes11

Key Numbers

- Codex CLI uses ~4× fewer tokens than Claude Code for equivalent tasks5

- GPT-5.3-Codex-Spark: separate credit pool during research preview — currently free from standard quota8

- Break-even for Plus ($20) vs API key: ~50–80 hours/month of intensive coding3

- Break-even for Pro ($200) vs API key: primarily justified by daily reasoning model (o3/o4-mini) usage

- Local tasks: consistently cheaper than cloud tasks (PR review, Slack/Linear triggers)2

- A

gpt-5.4session in API mode consuming 500K tokens: approximately $8–15 withoutmax_tokens_per_sessionguardrails4

Citations

-

OpenAI Codex Pricing — Developers — official pricing page with credit rate card and local vs cloud task distinction ↩ ↩2

-

OpenAI Codex Features — Developers — local vs cloud task execution and credit consumption ↩ ↩2

-

OpenAI Codex Pricing 2026: API Costs, Token Limits — break-even analysis across subscription tiers ↩ ↩2 ↩3 ↩4

-

Token usage spikes too quickly in Codex CLI sessions — GitHub Issue #6113 — community-reported runaway session costs ↩ ↩2

-

Codex vs Claude Code (2026): Benchmarks, Agent Teams & Limits Compared — MorphLLM — 4× token efficiency benchmark comparison ↩ ↩2 ↩3

-

Claude Code vs Codex CLI 2026 — NxCode — token count comparison on identical TypeScript tasks ↩ ↩2

-

Introducing GPT-5.3-Codex-Spark — OpenAI — Spark model announcement, Cerebras WSE-3, 1,000+ tokens/sec ↩

-

Models — Codex Developers — Spark separate limit pool during research preview ↩ ↩2 ↩3

-

Reasoning Effort Tuning: Minimal to xhigh — profile-based model routing configuration ↩

-

OpenAI Codex Pricing 2026 — UI Bakery Blog — official tips for maximising usage limits ↩

-

Codex CLI Overview — ccusage — ccusage tool for per-session token cost analysis ↩