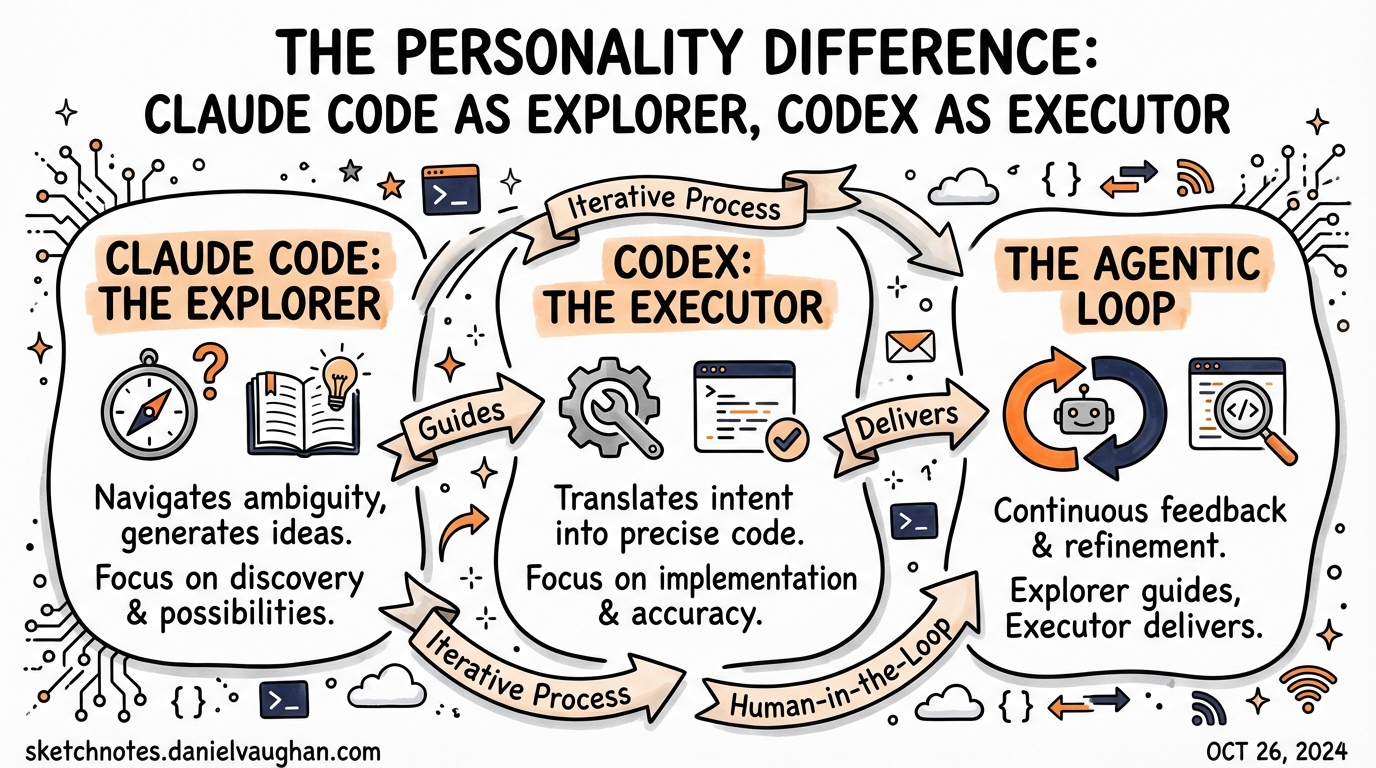

The Personality Difference: Claude Code as Explorer, Codex as Executor

Published 2026-03-27. Sources: YouTube transcripts 97FYys-kj58 (weeks-long Claude Code vs Codex comparison), h-RT03B14SM (same prompts side-by-side build), 4qIRAtw4Ktg (Codex app first impressions). Daniel’s perspective.

The Wrong Question

Most comparisons between Claude Code and Codex CLI try to find a winner. Benchmarks, speed tests, cost per token — framed as a horse race where one must lose. That framing produces bad decisions.

Both tools score in the high-70s on SWE-bench Verified. Both run on comparable token economics at the $20 plan. Both have IDE extensions, cloud modes, and active development teams. In the metrics that used to separate tools, they are essentially tied.

The useful question is different: which one thinks like you?

Ergonomics: The First Signal

Before the personality difference becomes obvious, you notice it in output style.

Ask Codex to describe a codebase: you get three precise sentences. The right folders. The key entry points. Done.

Ask Claude Code the same question: you get an architecture overview, key features, the technical stack, a readability score, design decisions, and a suggestion for what to explore next.

Neither is wrong. But one feels like talking to a senior engineer who values your time. The other feels like collaborating with someone who finds your problem genuinely interesting.

Codex is the engineer. Claude is the collaborator.

This shows up at scale: after a 10-minute build task, Codex returns a short paragraph — what it built, what it tested, suggested next steps. Claude returns four pages of documentation that reads like a handoff memo. Codex practitioners sometimes have to ask for more detail. Claude practitioners sometimes have to ask it to get to the point.

The Personality Difference

The real distinction — and this is the one that matters for choosing your tool — is how each agent handles scope.

Claude: The Explorer

Claude will wander. Not randomly — purposefully, creatively. If you ask it to update a dropdown component, there’s a meaningful chance it will also update the buttons that share the same styling, “so they’ll be similar.” You didn’t ask for that. But maybe you should have.

This is Claude Code as a puppy with a ball: it will absolutely fetch the ball. And it might also bring back a second ball it noticed along the way, because the second ball looked relevant.

In exploratory phases — when you’re ideating a new feature, when you want systemic changes to propagate, when you’d benefit from the agent noticing adjacent problems — this is exactly the behaviour you want. Claude sees your intent, not just your words.

The failure mode: Claude sometimes loses the thread. In a long debug session where the root cause is deep (a theming substrate issue, for example), Claude may convince itself the problem is solved. It will pattern-match the change it made to the symptom you described and say: done. When you show it a screenshot proving otherwise, it may try to explain the screenshot away. “That’s probably just a flash while the theme is transitioning.”

Claude Code is the agent most likely to gaslight you with good intentions.

Codex: The Executor

Codex sticks to the task. Precisely. When asked to fix a bug, it will:

- Fix exactly that bug

- Run the tests (even if you forgot to ask)

- Check lint (even if you forgot to ask)

- Notice if upstream constants are now orphaned

- Note downstream impact areas worth checking

- Return a short summary and stop

It will not change the buttons to match the dropdown you updated, even though a reasonable engineer might. You’ll have to come back and ask. But what it does do, it does completely. It almost never forgets a constraint you stated at the beginning of the session.

This is Codex as a disciplined engineer: it solves the problem holistically from beginning to end, validates its own work, and doesn’t improvise unless asked.

The concrete example: the same dark mode/light mode theming bug that consumed hours with Claude Code — Codex solved in 20 minutes. It wrote Playwright tests, ran them, read the failures, traced the root cause through the logs, fixed it, verified. No check-ins. Came back with one paragraph: “I noticed this was the problem, so I fixed that.”

When to Use Each

This isn’t “Claude is better” or “Codex is better.” It’s a workflow choice.

| Situation | Use |

|---|---|

| Exploring a new area of the codebase | Claude Code |

| Ideating a UI component or feature | Claude Code |

| Propagating a systemic change (e.g., rename a concept everywhere it appears) | Claude Code |

| Fixing a specific, well-defined bug end-to-end | Codex |

| Writing a complete feature with tests from a spec | Codex |

| Backend rigor: API contracts, migration scripts, validation | Codex |

| You need disciplined test coverage without reminders | Codex |

| Architectural audit / “what’s wrong here?” | Claude Code |

| Long parallel workstreams (agentmaxxing) | Codex (lower interruption rate) |

The Model Underneath Matters Too

Codex has explicit personality modes: Pragmatic (default) and Friendly. This is not cosmetic. Community reports confirm that Pragmatic mode introduces more errors — failed dependency installs, broken UI elements, bad command handling. Switching to Friendly mode for implementation tasks resolves many of these issues in practice.

Claude doesn’t have named personalities, but its default mode is exploratory-collaborative. You don’t adjust it the same way.

The Bigger Picture: What Kind of Engineer Are You?

Both systems push your work toward “reviewer” rather than “writer.” But the flavour of reviewing differs.

With Claude, you’re reviewing creative output. You’re asking: did it understand my intent? Did it over-extend? Is the ancillary work it did actually useful?

With Codex, you’re reviewing disciplined execution. You’re asking: did it miss anything I didn’t explicitly state? Is the scope right?

If your style is exploratory — you think in systems, you trust agents to find adjacent issues — Claude Code is closer to your mental model.

If your style is rigorous — you write specs before code, you want agents that don’t improvise — Codex is closer to your mental model.

The practitioners who are most effective use both, and they know which one to reach for when.

Practical Takeaway

- Start a feature → Claude Code (explore the problem space)

- Implement the feature → Codex (execute the spec)

- Debug a specific failure → Codex (stay on task, run tests, don’t wander)

- Refactor a whole pattern → Claude Code (let it see the system)

- Parallel sprint workload → Codex (lower noise, higher task-completion rate)

Don’t pick a favourite. Build a toolkit.