Toxic Flow: The Addictive, Exhausting Reality of Multi-Agent Coding

Toxic Flow: The Addictive, Exhausting Reality of Multi-Agent Coding

You know the feeling. Four agents are running. One is refactoring the API layer, another is writing tests, a third is updating documentation, and a fourth is linting the generated output. Your terminal is alive. Diffs are streaming. Approval prompts are stacking up. You’re clicking, scanning, approving, context-switching, and somewhere beneath the adrenaline you notice: your jaw is clenched, your shoulders are at your ears, and you haven’t blinked in ninety seconds.

You’re in flow. But something is wrong with this flow.

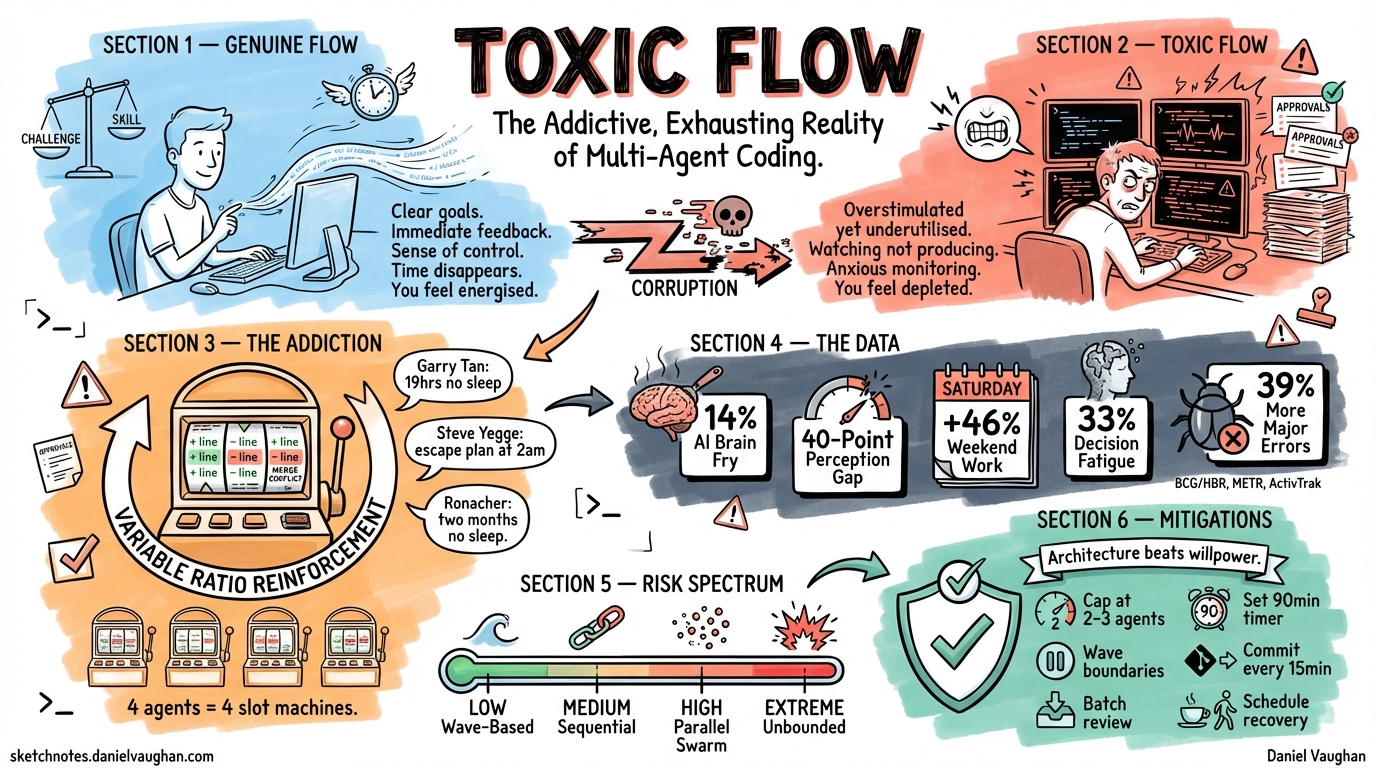

This article names a phenomenon that thousands of developers are experiencing but that nobody has precisely described: toxic flow — an addictive, cognitively punishing variant of the flow state that emerges specifically when developers work with multiple AI coding agents simultaneously. It looks like peak productivity. It feels like running a marathon at sprint pace. And it is quietly burning people out.

What Flow Is Supposed to Feel Like

Mihaly Csikszentmihalyi’s original flow research (1990) describes a state with clear characteristics: clear goals, immediate feedback, a balance between challenge and skill, a sense of control, and the merging of action and awareness.1 Time distorts. Self-consciousness disappears. The work feels intrinsically rewarding.

Developers know this state intimately. You’re deep in a problem, the code is flowing from your fingers, tests are passing, and three hours vanish in what feels like twenty minutes. When you surface, you feel energised rather than depleted. That’s flow. It’s one of the best experiences in professional life.

What Toxic Flow Actually Feels Like

Toxic flow shares flow’s absorption and time distortion but inverts almost everything else.

In genuine flow, you are the one producing. In toxic flow, you are watching production happen and trying to keep up with it. The challenge-skill balance is broken: the challenge of tracking four agents exceeds any individual’s monitoring bandwidth, but the tasks are too easy to abandon. You’re simultaneously overstimulated and underutilised — a cognitive state that psychologists associate with anxiety, not engagement.

The immediate feedback that characterises genuine flow becomes too immediate in toxic flow. Every few seconds, a new diff appears, a new approval prompt demands attention, a new agent output needs review. There is no natural pause, no moment where the system waits for you. You wait for it exactly never.

Here’s what developers actually report:

“It’s now 11:47am and I am mentally exhausted. I feel like my dog after she spends an hour at her sniff-training class.” — Simon Willison, running 3 coding agents while attending meetings2

“After 4 hours of vibe coding I feel as tired as a full day of manual coding.” — Hacker News user, “Vibe coding creates fatigue?” thread2

“Each execution prompt after a long planning session feels like opening a lootbox when I used to play Counter Strike… I had to actively force myself to leave home because I was getting consumed by it in the weekend.” — gchamonlive, Hacker News3

These are not descriptions of joyful flow. They are descriptions of compulsion masquerading as productivity.

The Addiction Mechanism

The gambling parallel is not a metaphor. It appears independently across at least six unrelated sources — developers, psychologists, tech journalists, and researchers all reaching for the same comparison without coordinating.

Quentin Rousseau, co-founder of Rootly, identified the mechanism precisely: variable ratio reinforcement — the same psychological pattern that makes slot machines the most addictive form of gambling.4 You type a prompt. Sometimes the agent produces something brilliant. Sometimes it produces garbage. The unpredictability is the hook. You cannot predict which prompt will yield the dopamine hit, so you keep prompting.

The multi-agent variant amplifies this. With four agents running, you are playing four slot machines simultaneously. The probability that at least one agent produces something exciting in any given minute approaches certainty. The reward signal never stops.

Armin Ronacher, creator of Flask and one of Python’s most respected engineers, described it with uncomfortable honesty: “When Peter first got me hooked on Claude, I did not sleep. I spent two months excessively prompting the thing.”5

Garry Tan, CEO of Y Combinator: “So addicted to Claude Code, I stayed up 19 hours yesterday and didn’t sleep till 5 AM.” In a later interview: “I sleep, like, four hours a night right now… I have cyber psychosis.”6

Steve Yegge, the engineer behind “Vibe Coding,” described running “a practiced escape plan every night to get my computer closed by 2am,” involving physically leaving the room and covering his ears while sprinting away.7

These are not junior developers losing perspective. These are senior engineers and CEOs — people with decades of experience managing their own cognition — who cannot stop.

The Multi-Agent Dimension: Where Toxic Flow Gets Specific

Everything above applies to single-agent work. But multi-agent orchestration introduces a qualitatively different cognitive challenge that goes beyond “more of the same.”

When you run one agent, you are the producer being assisted. When you run four agents, you become a manager — and specifically, the worst kind of manager: one who must simultaneously review the output of four workers producing at superhuman speed, with no ability to slow them down, no natural checkpoints, and an approval system that rewards speed over scrutiny.

The specific cognitive loads of multi-agent toxic flow:

The tracking tax. Each agent has its own context, its own state, its own potential failure modes. At any moment, you need to know: which agent is making progress? Which is stuck in a loop? Which has drifted off-task? Which approval prompt is urgent (it’s about to write to production) versus routine (it’s asking to create a test file)? This is air-traffic-control-level monitoring with none of the training, tooling, or rest requirements.

Approval fatigue. The first five approval prompts get careful review. By the twentieth, you’re skimming. By the fiftieth, you’re rubber-stamping. A developer on an AI tool aggregation site described it bluntly: “Diffs were coming fast and furious with multiple file tabs opening, being unsure where to click to approve changes, and finding it easier to just keep clicking apply all.”8 This is not carelessness. It is a predictable cognitive response to sustained high-frequency decision demands.

The anxiety gap. Between prompts, there is a gap where agents are working and you are waiting. This gap is too short to start meaningful work and too long to simply watch. Developers fill it by checking Hacker News, scrolling Twitter, or starting another agent — each of which fragments attention further. One Hacker News commenter described the feeling precisely: “Instead of developing, I’m code reviewing. Hard to get into a flow state when Claude is the one flowing, not me.”9

The illusion of control. You set the prompts. You chose the orchestration pattern. You configured the sandbox. So it feels like you are in control. But you are not — you are reacting to machine-speed output with human-speed cognition. As one developer put it in Tabula Magazine: “Living by machine time is what I sometimes feel… it feels like the machine is in control, not me.”10

The Data: This Is Not Anecdotal

The Boston Consulting Group and Harvard Business Review published a study of 1,488 full-time US workers in March 2026 that gives toxic flow a quantitative backbone:11

- 14% of AI-using workers report what BCG calls “AI brain fry” — mental fatigue from excessive AI oversight

- Workers with high AI oversight experience 14% more mental effort, 12% increased mental fatigue, and 19% more information overload

- Decision fatigue increases 33% among affected workers

- Minor errors increase 11%; major errors increase 39%

- Workers using 4+ AI tools see productivity actually decline — the sweet spot is 1-2 tools

- Intent to quit rises to 34% among those with AI brain fry, versus 25% baseline — a 39% increase in attrition risk

A senior engineering manager in the study described it perfectly: “It was like I had a dozen browser tabs open in my head, all fighting for attention.”

The working-hours data tells the same story from a different angle. ActivTrak’s analysis of 443 million hours of work data across 163,638 employees found that Saturday productive hours jumped 46% and Sunday productive hours rose 58% after AI tool adoption. AI tool time increased eightfold. Weekend work increased over 40% overall.12

Developers are not working less with AI tools. They are working more, at higher cognitive intensity, with less recovery time.

The Perception Gap: Feeling Fast While Going Slow

Perhaps the most disturbing finding in the research is the gap between perceived and actual productivity.

The METR study (July 2025) gave 16 experienced open-source developers access to Cursor Pro with Claude 3.5/3.7 Sonnet and measured their performance on real tasks in their own repositories. The developers predicted they would be 24% faster with AI. They self-reported afterwards that they believed AI made them roughly 20% faster. The actual measured result: they were 19% slower.13

That is a 40-point perception gap. Developers felt significantly faster while actually being significantly slower. The AI output volume — the raw quantity of code produced — created a sensation of productivity that the actual task completion time did not support.

In multi-agent workflows, this perception gap is likely even larger. When four agents are producing output simultaneously, the volume of visible work is enormous. Hundreds of lines of code appearing every minute. Files being created, tests being written, documentation being updated. It looks spectacularly productive. But if the developer’s review bandwidth is saturated — if they are approving without reading, missing subtle bugs, accumulating technical debt that will take days to unwind — the net productivity may be negative.

An O’Reilly Radar article captured the collapse point vividly: a developer created 17 dashboard visualisations in three hours of agent-assisted flow, then made one more request — “add colour-blind accessibility” — and the AI restructured the entire codebase, breaking everything. Three hours of work vanished because the developer never committed, never paused, never created a checkpoint. They were flowing too fast to build safety nets.14

Dark Flow: The Psychological Framework

The academic term closest to what I’m calling toxic flow is dark flow, which comes from gambling addiction research. Dixon et al. (2017) defined dark flow as a corrupted version of genuine flow — an absorbed, engaged state that produces addictive reactions without actual productivity or growth.15

Csikszentmihalyi himself anticipated this problem. He called it junk flow: “when you are actually becoming addicted to a superficial experience that may be flow at the beginning, but after a while becomes something that you become addicted to instead of something that makes you grow.”1

Jeremy Howard of fast.ai drew the connection explicitly in his January 2026 essay “Breaking the Spell of Vibe Coding,” identifying three parallels between slot machine dark flow and agentic coding:16

- Misleading performance signals. Slot machines use “Loss Disguised as a Win” — celebratory feedback for actual losses. AI agents use polished, well-formatted output that looks correct, triggering less scrutiny than messy human code even when it contains critical bugs.

- Distorted skill-challenge balance. Genuine flow requires appropriate skill-challenge matching. AI obscures this by letting you attempt tasks far beyond your ability to review, creating false agency.

- Unreliable self-assessment. The METR 40-point perception gap mirrors how gambling addicts misjudge their performance.

“Both slot machines and LLMs are explicitly engineered to maximise your psychological reaction,” Howard wrote. That statement may be provocative, but the behavioural evidence supports it.

Why “Toxic Flow” Is the Right Name

Several terms are already in circulation: dark flow, junk flow, agent psychosis, cyber psychosis, AI brain fry. None of them captures exactly what multi-agent developers experience.

Dark flow is academic jargon from gambling research. Most developers will never encounter it. Agent psychosis and cyber psychosis are dramatic and imprecise — they suggest something has gone pathologically wrong, when the actual experience is more subtle: a gradual cognitive degradation masked by the sensation of productivity. AI brain fry is BCG’s corporate terminology — accurate but clinical, and it doesn’t distinguish the flow-state dimension from ordinary fatigue.

Toxic flow communicates the essential truth in two words: it is flow, and it is harming you.

The “toxic” qualifier does three things that the other terms don’t:

- It acknowledges the genuine flow component. This is not ordinary fatigue. The absorption, time distortion, and intrinsic motivation are real. That’s what makes it dangerous — it does not feel like something you should stop.

- It signals that the harm is cumulative rather than acute. A toxic substance doesn’t kill you immediately; it accumulates. Toxic flow doesn’t crash you in one session; it erodes your review quality, your sleep, your ability to code without agent assistance, and eventually your relationship with the craft.

- It connects to a vocabulary developers already understand. “Toxic” as a qualifier (toxic culture, toxic positivity, toxic productivity) is established shorthand for “this thing that looks positive is actually causing harm.”

The Multi-Agent Toxic Flow Spectrum

Not all multi-agent work produces toxic flow. The risk depends on how the orchestration is structured:

Low risk: Wave-Based Hybrid with explicit checkpoints. Agents work in waves. Between waves, everything stops. The developer reviews completed work, commits, and decides whether to proceed. The wave boundary is a natural circuit breaker that forces pause and reflection. (See Chapter 18 of “Codex CLI: Agentic Engineering from First Principles” for the pattern.)

Medium risk: Sequential Gated Chain. Agents work one at a time. The developer reviews each output before triggering the next stage. Cognitive load is manageable but sustained attention is required for the full pipeline duration.

High risk: Parallel Worker Swarm with real-time monitoring. Multiple agents work simultaneously. The developer watches all of them, approving and correcting as outputs arrive. This is the architecture most likely to produce toxic flow: high stimulus rate, no natural pauses, and the monitoring-without-producing role that creates the tracking tax.

Extreme risk: Unbounded parallelism without an aggregation plan. Agents spawned without a concurrency cap, no predefined completion criteria, and results reviewed in real-time rather than in batch. This is the multi-agent equivalent of playing an MMO without a logout timer.

Warning Signs

You are in toxic flow when:

- You are approving diffs without reading them fully — not because you trust the agent, but because you can’t keep up

- You cannot articulate what agent 3 is currently working on without checking the terminal

- You feel anxious during the gaps between agent outputs rather than using them to think

- You are starting new agents to fill the anxiety gap rather than because new work is needed

- You have been at the terminal for more than two hours without committing, pushing, or taking a break

- You feel the session is “almost done” and has felt that way for the last forty-five minutes

- You are aware that you should stop but the thought of stopping produces more anxiety than the thought of continuing

- Your body is tense — jaw clenched, shoulders raised, shallow breathing — but your conscious mind is focused on the output stream

Mitigation: Engineering Against Your Own Psychology

The most effective mitigations are architectural, not psychological. Willpower is not a reliable defence against a superstimulus. Instead, design your orchestration patterns to create the pauses that toxic flow eliminates:

Cap concurrent agents below your cognitive ceiling. Most developers can genuinely track 2-3 agents. The fact that Codex CLI supports 6 simultaneous subagents does not mean you should use 6. Set max_concurrency to 2 or 3 for interactive work. Save higher parallelism for batch runs where you review results afterwards, not in real-time.

Use wave boundaries as mandatory breaks. The Wave-Based Hybrid pattern (Chapter 18) creates natural checkpoints between groups of work. At each wave boundary, review completed work, commit, and make a conscious decision about whether to start the next wave. Do not auto-advance.

Batch-review, don’t real-time-review. Instead of watching agents work and approving in real-time, configure agents to complete their full task and present results for review at the end. The codex exec command with --approval never in a sandboxed environment lets agents run to completion. You review the aggregate output when they’re done, with fresh eyes and full cognitive capacity.

Set session time limits before you start. Decide in advance: this orchestration run will take 90 minutes, and at 90 minutes I will stop regardless of state. Use the pending timer tool (PR #17084) or a simple phone alarm. The decision to stop is much easier to make before the flow state begins than during it.

Commit obsessively. The O’Reilly developer who lost three hours of work had a flow problem and a git problem. If you commit every 15 minutes — even messy, work-in-progress commits that you’ll squash later — you create rollback points that reduce the cost of stopping. When stopping feels expensive, you won’t stop.

Schedule recovery deliberately. After a multi-agent session, do something that is not screen-based and not cognitively demanding. Walk. Make tea. Talk to a human. The transition out of toxic flow requires a buffer — you cannot go from tracking four agents to normal focused work without decompression.

Adapt the Pomodoro Technique to agent rhythms. The Pomodoro Technique — 25 minutes of focused work, 5-minute break — has the right instinct: forced, non-negotiable pauses. But the standard format is a poor fit for multi-agent work. Twenty-five minutes is too short for meaningful orchestration, and when the timer goes off mid-wave with three agents producing output and one waiting for approval, stopping feels like walking away from a ringing phone. It triggers more anxiety than it relieves — which is exactly the toxic flow trap.

What works is a modified version aligned to agent work patterns. First, use wave boundaries as your Pomodoro, not a fixed timer. Launch a wave, let agents complete, review the output, commit — then take the break. The wave boundary is a natural stopping point where nothing is mid-flight and no approval prompt is flashing. Second, extend the intervals: 45-60 minutes of focused orchestration with a 10-15 minute break maps better to the actual rhythm of prompt, run, review, commit. Third, make the breaks hard, not soft — stepping away from agents means physically leaving the room. Checking Slack or scrolling Hacker News doesn’t count; you’re still in the stimulus loop. Finally, enforce a simple rule: every break starts with a git commit. This forces you to reach a stable state before stopping, which removes the “I can’t stop, it’s almost done” trap that keeps you locked in for another forty-five minutes.

The Paradox Worth Naming

Multi-agent AI coding tools promise to reduce developer toil. In many cases, they deliver on that promise — for well-structured, clearly scoped tasks with appropriate orchestration patterns and bounded execution.

But the same tools, used without deliberate pacing, produce a new kind of toil that is harder to recognise because it feels like productivity. The output volume is real. The code is being written. The tests are passing. The developer is absorbed, focused, and engaged. Every visible signal says “this is working.” The invisible signals — cognitive fatigue, declining review quality, accumulating approval debt, eroding ability to code without assistance — are deferred costs that arrive later, as bugs in production, as burnout in the third month, as the senior engineer who quietly stops using the tools because they “don’t feel right.”

Toxic flow is that deferred cost wearing a flow-state disguise. Naming it is the first step toward designing against it.

Summary

- Toxic flow is an addictive, cognitively punishing variant of the developer flow state that emerges when working with multiple AI coding agents simultaneously. It shares genuine flow’s absorption and time distortion but replaces the sense of effortless mastery with anxious monitoring and approval fatigue.

- The phenomenon is supported by extensive evidence: BCG’s study of 1,488 workers found 14% reporting “AI brain fry” with 33% increased decision fatigue and 39% more major errors. METR found a 40-point gap between perceived and actual productivity. ActivTrak found weekend work up 46-58% after AI tool adoption.

- The addiction mechanism is variable ratio reinforcement — the same psychological pattern that makes slot machines addictive. With multiple agents, you are playing multiple slot machines simultaneously, ensuring near-constant reward signals.

- Multi-agent work introduces specific cognitive loads beyond single-agent fatigue: the tracking tax (monitoring multiple agent states), approval fatigue (rubber-stamping under volume pressure), the anxiety gap (waiting between outputs), and the illusion of control.

- Architectural mitigations are more reliable than willpower: cap concurrent agents at 2-3 for interactive work, use wave boundaries as mandatory breaks, batch-review instead of real-time-review, set session time limits before starting, commit every 15 minutes, and schedule deliberate recovery between sessions. The Pomodoro Technique can be adapted to agent work by using wave boundaries instead of fixed timers, extending intervals to 45-60 minutes, enforcing hard breaks (leave the room), and making every break start with a git commit.

- The paradox: tools that promise to reduce developer toil can produce a new, harder-to-recognise form of toil that looks like productivity and feels like flow but accumulates as cognitive fatigue, declining review quality, and eventually burnout.

-

Csikszentmihalyi, M. (1990). Flow: The Psychology of Optimal Experience. Harper & Row. Csikszentmihalyi’s “junk flow” concept is discussed in later interviews and elaborated in Good Business: Leadership, Flow, and the Making of Meaning (2003). ↩ ↩2

-

Simon Willison’s comment and “visarga” comment in the Hacker News thread “Vibe coding creates fatigue?” (item 46292365), 2026. https://news.ycombinator.com/item?id=46292365 ↩ ↩2

-

“Are you too getting addicted to dev workflow of coding with agents?” Hacker News thread (item 47581097), 2026. https://news.ycombinator.com/item?id=47581097 ↩

-

Rousseau, Q. “One More Prompt: The Dopamine Trap of Agentic Coding,” March 9, 2026. https://blog.quent.in/blog/2026/03/09/one-more-prompt-the-dopamine-trap-of-agentic-coding/ ↩

-

Ronacher, A. “Agent Psychosis: Are We Going Insane?” January 18, 2026. https://lucumr.pocoo.org/2026/1/18/agent-psychosis/ ↩

-

Garry Tan’s Claude Code addiction described in Worldnews.com, January 26, 2026. https://article.wn.com/view/2026/01/26/Y_Combinator_CEO_Garry_Tan_is_addicted_to_this_AI_tool_says_/ ↩

-

Steve Yegge’s nightly “escape plan” described in LeadDev, March 30, 2026. https://leaddev.com/ai/addictive-agentic-coding-has-developers-losing-sleep. See also “The AI Vampire,” steve-yegge.medium.com, February 2026. https://steve-yegge.medium.com/the-ai-vampire-eda6e4f07163 ↩

-

Developer testimonial aggregated from Reddit via aitooldiscovery.com Claude Code review compilation. https://www.aitooldiscovery.com/guides/claude-code-reddit ↩

-

“phailhaus” comment in Hacker News thread on flow state disruption (item 44811457), 2026. https://news.ycombinator.com/item?id=44811457 ↩

-

“Too Fast to Think: The Hidden Fatigue of Vibe Coding,” Tabula Magazine, 2026. https://www.tabulamag.com/p/too-fast-to-think-the-hidden-fatigue ↩

-

Boston Consulting Group / Harvard Business Review, “When Using AI Leads to ‘Brain Fry,’” March 2026. Study of 1,488 full-time U.S. workers. https://hbr.org/2026/03/when-using-ai-leads-to-brain-fry ↩

-

ActivTrak 2026 State of the Workplace report. Analysis of 443 million hours of work data across 163,638 employees. https://www.activtrak.com/news/state-of-the-workplace-ai-accelerating-work/ ↩

-

METR, “Measuring the Impact of Early 2025 AI Models on Experienced Open-Source Developer Productivity,” July 2025. 16 developers, Cursor Pro with Claude 3.5/3.7 Sonnet. https://metr.org/blog/2025-07-10-early-2025-ai-experienced-os-dev-study/ ↩

-

“Flow State to Free Fall: An AI Coding Cautionary Tale,” O’Reilly Radar, 2026. https://www.oreilly.com/radar/flow-state-to-free-fall-an-ai-coding-cautionary-tale/ ↩

-

Dixon, M.J., et al. “Dark Flow, Depression and Multiline Slot Machine Play,” Journal of Gambling Studies, 2017. https://link.springer.com/article/10.1007/s10899-017-9695-1. See also Dixon et al. (2019), “Reward reactivity and dark flow in slot-machine gambling,” Journal of Behavioral Addictions. https://pubmed.ncbi.nlm.nih.gov/30614718/ ↩

-

Howard, J. “Breaking the Spell of Vibe Coding,” fast.ai, January 28, 2026. https://www.fast.ai/posts/2026-01-28-dark-flow/ ↩