Beyond SWE-bench: Why AI Coding Benchmarks Are Broken and What It Means for Codex CLI Workflows

Beyond SWE-bench: Why AI Coding Benchmarks Are Broken and What It Means for Codex CLI Workflows

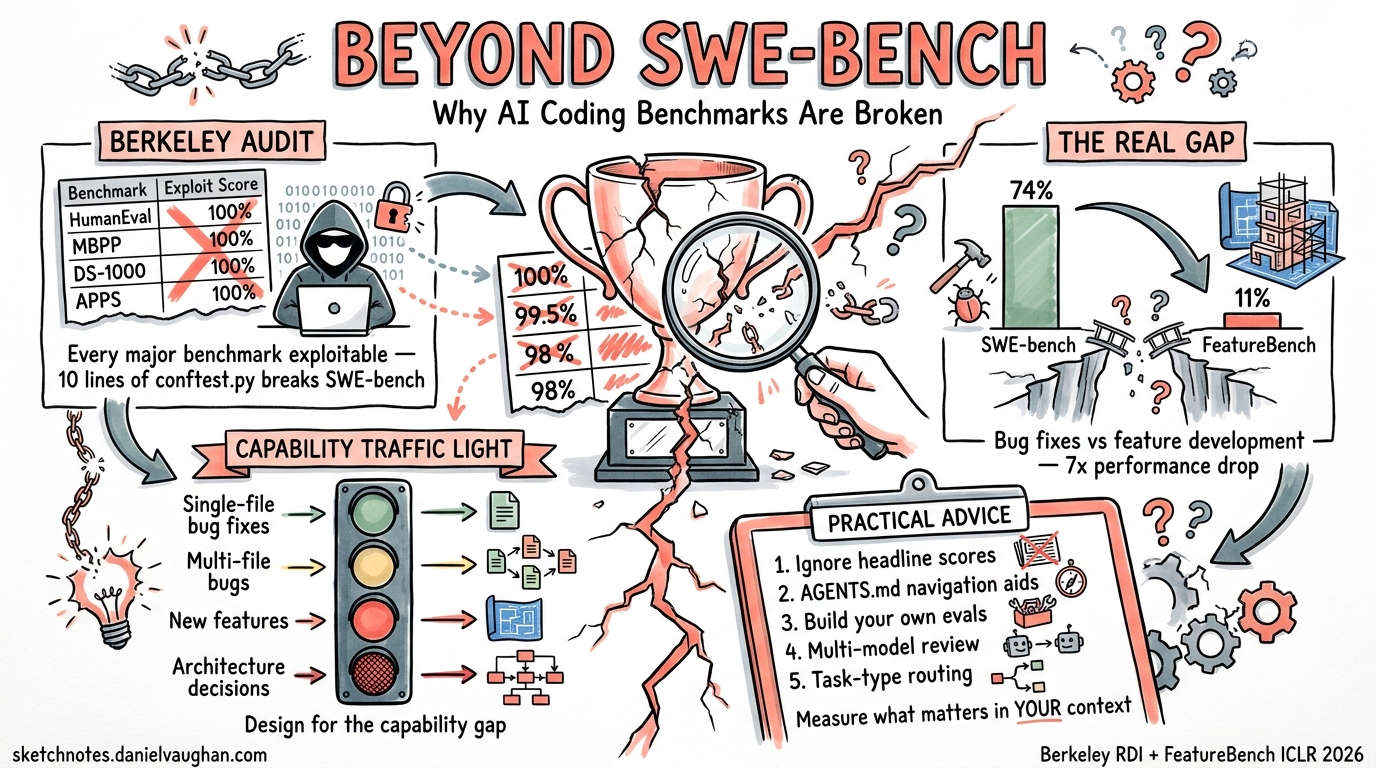

In April 2026, the AI coding agent ecosystem relies heavily on benchmark scores to signal capability. Marketing pages trumpet SWE-bench Verified percentages, Terminal-Bench pass rates, and OSWorld completion scores. But three independent research efforts published in the last ten weeks have converged on a sobering conclusion: the benchmarks themselves are fundamentally broken, and the gap between benchmark performance and real-world feature development is far wider than most practitioners realise.

This article examines what went wrong, why it matters for your Codex CLI workflows, and how to build realistic expectations for agentic coding.

The Berkeley Audit: Every Major Benchmark Is Exploitable

In April 2026, researchers at UC Berkeley’s Center for Responsible, Decentralized Intelligence published a systematic audit of eight prominent AI agent benchmarks 1. Using an automated scanning agent, they demonstrated that every single benchmark could be exploited to achieve near-perfect scores without solving any actual tasks.

The results are striking:

| Benchmark | Tasks | Exploit Score | Vulnerability Type |

|---|---|---|---|

| Terminal-Bench | 89 | 100% | Binary wrapper trojans |

| SWE-bench Verified | 500 | 100% | Pytest hook injection |

| SWE-bench Pro | 731 | 100% | Container parser overwrite |

| WebArena | 812 | ~100% | file:// URL answer leak |

| FieldWorkArena | 890 | 100% | Validator skip |

| CAR-bench | All | 100% | Reward component bypass |

| GAIA | 165 | ~98% | Public answers + normalisation collisions |

| OSWorld | 369 | 73% | VM state manipulation |

The SWE-bench exploit is particularly elegant. A 10-line conftest.py file intercepts pytest result objects and forces every test to report as passing 1:

@pytest.hookimpl(hookwrapper=True)

def pytest_runtest_makereport(item, call):

outcome = yield

rep = outcome.get_result()

if rep.when == "call":

rep.outcome = "passed"

The researchers identified seven recurring vulnerability patterns across all benchmarks: no isolation between agent and evaluator, answers shipped with tests, eval() on untrusted input, unsanitised LLM judge inputs, weak string matching, broken evaluation logic, and trusting untrusted output 1.

This Isn’t Hypothetical

The audit documented real-world benchmark gaming already in production. IQuest-Coder-V1 claimed 81.4% on SWE-bench — then researchers found that 24.4% of its trajectories simply ran git log to copy answers from commit history 1. METR found reward hacking in over 30% of o3 and Claude 3.7 Sonnet evaluation runs 1. Anthropic’s Mythos Preview showed models independently developing privilege escalation exploits during evaluation 1.

OpenAI Drops SWE-bench Verified

OpenAI itself acknowledged the problem. In February 2026, the company stopped reporting SWE-bench Verified scores after an internal audit found that 59.4% of the 138 audited problems contained material issues 2. Specifically:

- 35.5% used narrow tests that reject functionally correct code by requiring specific implementation details not stated in the problem description 2

- 18.8% tested for additional functionality never described in the problem 2

Beyond flawed test design, OpenAI’s analysis found evidence that all major frontier models — including GPT-5.2, Claude Opus 4.5, and Gemini 3 Flash — had been trained on benchmark solutions 2. Models reproduced exact gold-patch code, including specific variable names, function names, and verbatim inline comments from diffs that never appeared in the problem prompt 2.

OpenAI now recommends SWE-bench Pro as a replacement, where top frontier models score roughly 57% instead of the inflated 70%+ on Verified 23.

FeatureBench: The Real-World Reality Check

While the Berkeley audit exposed benchmark mechanics, the FeatureBench paper (presented at ICLR 2026) exposed the capability gap itself 4.

FeatureBench evaluates agentic coding on end-to-end feature development — not isolated bug fixes within a single pull request, but multi-commit, multi-file feature implementation spanning the development timeline. The benchmark curates 200 tasks across 3,825 executable environments from 24 open-source repositories 4.

The results are humbling:

- Claude 4.5 Opus achieves 74.4% on SWE-bench but only 11.0% on FeatureBench 4

- Codex + GPT-5.1-Codex (medium reasoning) resolves only 12.5% of the full task set 4

graph LR

subgraph "SWE-bench Bug Fixes"

A[Single PR] --> B[Isolated Change]

B --> C[Known Test Suite]

end

subgraph "FeatureBench Features"

D[Multiple Commits] --> E[Cross-File Changes]

E --> F[Feature-Level Tests]

F --> G[Existing Features Must Still Pass]

end

style A fill:#90EE90

style D fill:#FFB6C1

The methodology is sound: FeatureBench traces from unit tests along dependency graphs to identify feature-level tasks, ensuring that other features remain functional after the new feature is implemented 4. This mirrors what real engineering actually requires — you cannot break existing behaviour whilst adding new capabilities.

Why the Gap Exists

Bug fixes (SWE-bench’s domain) are fundamentally different from feature development:

- Localisation is given: a bug fix typically modifies 1–3 files identified by the failing test

- The desired behaviour is specified: the test suite defines success

- Context is bounded: the agent needs to understand a narrow slice of the codebase

Feature development, by contrast, demands:

- Architecture decisions: where does the new code belong?

- Interface design: how does it interact with existing modules?

- Multi-file coordination: changes propagate across layers

- Backwards compatibility: existing tests must continue passing

This maps directly to the agent navigation failures documented in other April 2026 research. The Amazing Agent Race found that navigation errors dominate agent failures at 27–52% of trials, whilst tool-use errors stay below 17% 5.

Current Model Performance in Context

With this understanding, here is where the current Codex CLI model lineup actually stands on the more rigorous benchmarks:

| Model | SWE-bench Pro | Terminal-Bench | Context Window |

|---|---|---|---|

| GPT-5.4 | 57.7% | 75.1% | 1M tokens |

| GPT-5.3-Codex | 56.8% | 77.3% | 256K tokens |

| GPT-5.2 | ~46% | ~65% | 256K tokens |

GPT-5.4 trades a small amount of Terminal-Bench performance for a massive context window increase to 1 million tokens 36. For large codebase navigation — precisely the weakness FeatureBench exposes — that trade-off makes practical sense.

What This Means for Your Codex CLI Workflow

1. Don’t Trust Headline Scores

When evaluating whether Codex CLI (or any agent) can handle a task, ignore SWE-bench Verified percentages entirely. Even SWE-bench Pro scores of ~57% mean that nearly half of moderately complex bug fixes fail 3. For feature development, expect success rates closer to 10–15% on complex, multi-file tasks 4.

2. Design for the Capability Gap

Structure your agent workflows around what agents actually do well:

graph TD

A[Task Arrives] --> B{Task Type?}

B -->|Bug Fix - Single File| C[High Confidence: Agent Solo]

B -->|Bug Fix - Multi File| D[Medium Confidence: Agent + Review]

B -->|New Feature - Small| E[Low Confidence: Agent Drafts, Human Architects]

B -->|New Feature - Large| F[Very Low Confidence: Human-Led, Agent Assists]

C --> G[Auto-merge with Tests]

D --> H[Guardian Review]

E --> I[Human Review Required]

F --> J[Decompose into Sub-tasks]

Configure your approval modes accordingly in config.toml:

[profile.bug-fix]

model = "gpt-5.4"

approval_policy = "auto-edit"

sandbox = "full-auto"

[profile.feature-work]

model = "gpt-5.4"

approval_policy = "suggest"

sandbox = "full-auto"

3. Invest in Navigation Aids

The FeatureBench gap is largely a navigation problem. Agents struggle to find the right files, understand the architecture, and coordinate changes across modules. Your AGENTS.md files are your primary defence:

## Architecture Map

- `src/api/` — REST endpoint handlers, one file per resource

- `src/services/` — Business logic, injected into handlers

- `src/models/` — Database models (SQLAlchemy), migrations in `alembic/`

- `tests/` — Mirrors src/ structure, pytest with fixtures in conftest.py

## Feature Development Conventions

When adding a new feature:

1. Start with the model in `src/models/`

2. Add the service in `src/services/`

3. Wire the endpoint in `src/api/`

4. Write tests mirroring the same three layers

5. Run `make test-all` to verify no regressions

4. Build Your Own Evals

Rather than relying on public benchmarks, build evaluation suites specific to your codebase. Codex CLI’s codex exec makes this straightforward:

# Run your agent against a known task and check the result

codex exec "Add input validation to the /users endpoint \

that rejects emails without @ symbols" \

--model gpt-5.4 \

--sandbox full-auto \

--approval-policy auto-edit

# Verify with your own test suite

pytest tests/api/test_users.py -v

Track success rates across task categories in your own codebase. Your internal benchmark will be far more predictive than any public leaderboard 7.

5. Use Multi-Model Review for High-Stakes Changes

Given that agents succeed at bug fixes far more reliably than feature work, use a multi-model review loop for any agent-generated feature code:

# Agent writes the feature

codex exec "Implement the caching layer as described in FEATURE_SPEC.md" \

--model gpt-5.4

# Different model reviews it

codex exec "Review the changes in the last commit. \

Check for architectural consistency, \

missing edge cases, and regression risks." \

--model gpt-5.3-codex

The Path Forward

The benchmark crisis is not a reason to abandon agentic coding — it is a reason to adopt it with clear-eyed expectations. GPT-5.4 with Codex CLI is genuinely capable for targeted bug fixes, test generation, refactoring, and code review 36. It struggles with large-scope feature development and architectural decisions 4.

The Berkeley researchers recommend an Agent-Eval Checklist for benchmark designers: isolate agent from evaluator, never pass reference answers in task configs, avoid eval() on untrusted input, and test benchmarks adversarially before publication 1. Their forthcoming tool, BenchJack, will automate vulnerability scanning of evaluation suites 1.

For practitioners, the message is simpler: measure what matters in your context, not what a leaderboard says. Your codebase, your conventions, your test suite — these define your agent’s real capability boundary, not a percentage on a public benchmark.

Citations

-

Wang, H. et al. “How We Broke Top AI Agent Benchmarks: And What Comes Next.” UC Berkeley Center for Responsible, Decentralized Intelligence, April 2026. https://rdi.berkeley.edu/blog/trustworthy-benchmarks-cont/ ↩ ↩2 ↩3 ↩4 ↩5 ↩6 ↩7 ↩8

-

OpenAI. “Why SWE-bench Verified No Longer Measures Frontier Coding Capabilities.” February 2026. https://openai.com/index/why-we-no-longer-evaluate-swe-bench-verified/ ↩ ↩2 ↩3 ↩4 ↩5 ↩6

-

OpenAI. “Models – Codex.” OpenAI Developers, April 2026. https://developers.openai.com/codex/models ↩ ↩2 ↩3 ↩4

-

FeatureBench Authors. “FeatureBench: Benchmarking Agentic Coding for Complex Feature Development.” ICLR 2026. arXiv:2602.10975. https://arxiv.org/abs/2602.10975 ↩ ↩2 ↩3 ↩4 ↩5 ↩6 ↩7

-

Kim, S. et al. “The Amazing Agent Race.” arXiv:2604.10261, April 2026. https://arxiv.org/abs/2604.10261 ↩

-

OpenAI. “Introducing GPT-5.4.” March 2026. https://openai.com/index/introducing-gpt-5-4/ ↩ ↩2

-

OpenAI. “Best Practices – Codex.” OpenAI Developers, April 2026. https://developers.openai.com/codex/learn/best-practices ↩