Remote HTTP MCP: Codex CLI Completes the Enterprise Tool Services Stack

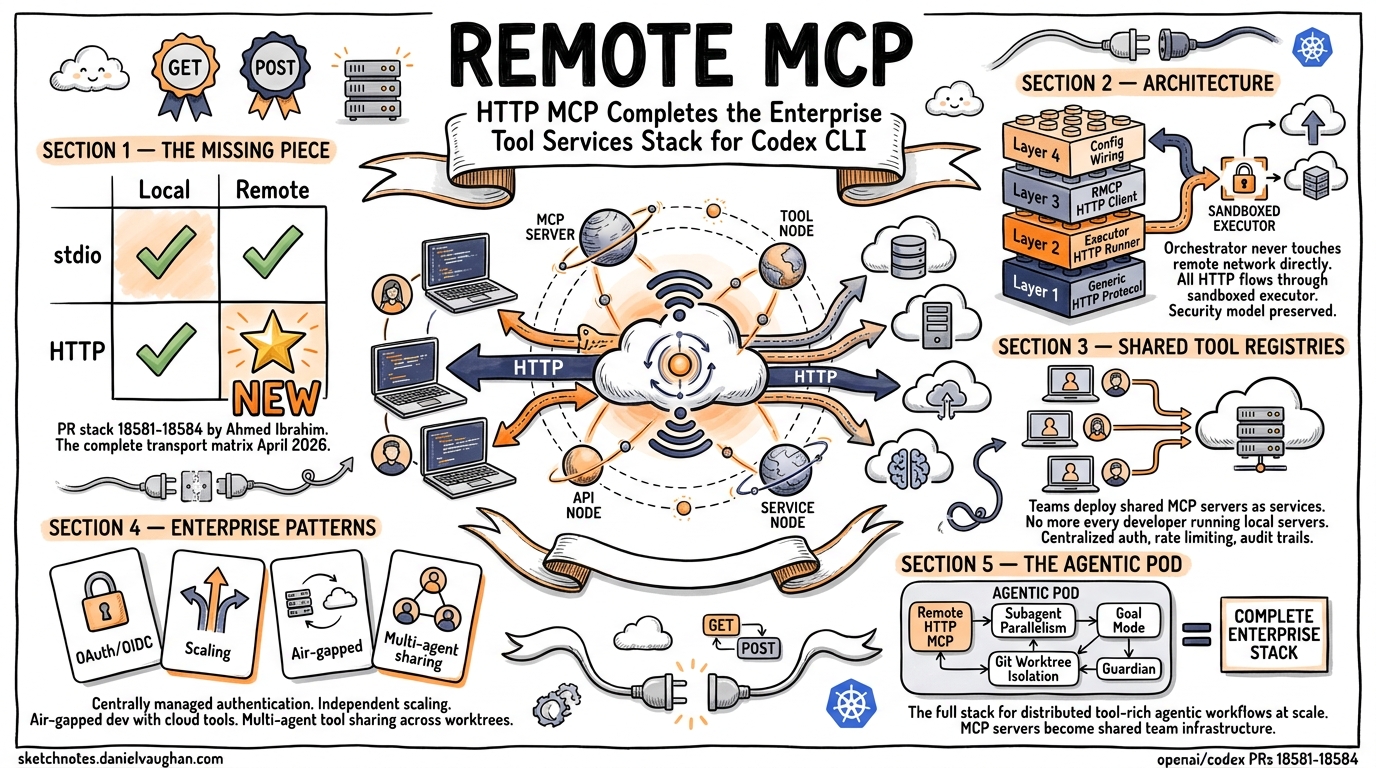

On April 19, 2026, OpenAI engineer Ahmed Ibrahim (@aibrahim-oai) opened a four-part PR stack (#18581–#18584) that would complete remote streamable HTTP MCP support in Codex CLI. Once merged, it unlocks a significant enterprise capability: MCP servers deployed as cloud-native microservices.

Why This Matters

Until now, Codex CLI’s MCP support has been strongest for local stdio transports — MCP servers running as child processes on the developer’s machine. Remote MCP existed for stdio (wired in PR #18212), but HTTP transport was local-only.

The new PR stack adds the missing piece: remote HTTP MCP, where MCP servers run on separate infrastructure (cloud VPCs, Kubernetes clusters, corporate networks) and are accessed over HTTP from within the executor sandbox.
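For orientation, a streamable HTTP MCP exchange begins with a JSON-RPC `initialize` message POSTed to the server's endpoint. The sketch below only builds that request with Python's standard library and does not send it. The URL is the hypothetical internal host used in the config example in this article, the client name and version are placeholders, and none of this reflects Codex CLI's internal code.

```python
import json
import urllib.request

# Hypothetical endpoint; substitute a real MCP server URL.
MCP_URL = "https://mcp-tools.internal.corp/jira"


def build_initialize_request(url: str, request_id: int = 1) -> urllib.request.Request:
    """Build (but do not send) a JSON-RPC initialize request for streamable HTTP."""
    payload = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "initialize",
        "params": {
            "protocolVersion": "2025-06-18",
            "capabilities": {},
            # Placeholder client identity, not Codex CLI's real values.
            "clientInfo": {"name": "example-client", "version": "0.0.1"},
        },
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        method="POST",
        headers={
            "Content-Type": "application/json",
            # The server may answer with plain JSON or with an SSE stream.
            "Accept": "application/json, text/event-stream",
        },
    )


req = build_initialize_request(MCP_URL)
```

In the remote setup described here, a request like this would be issued from the executor sandbox rather than from the developer's machine.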

The Architecture

The implementation separates concerns cleanly, with one layer per PR:

- Generic HTTP protocol (#18581) — typed request/response payloads with streaming body support, deliberately MCP-agnostic

- Executor HTTP runner (#18582) — server-side handler that performs actual network I/O inside the sandbox

- RMCP HTTP client (#18583) — wraps the executor as a standard RMCP-compatible transport

- Wiring (#18584) — routes configs with experimental_environment = "remote" through the new path

The orchestrator never touches the remote network directly. All HTTP traffic flows through the sandboxed executor, maintaining Codex’s security model.
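The four layers can be sketched in miniature as follows. This is an illustrative model only: every class and function name is hypothetical, the "network" is an in-process stand-in so the example runs offline, and the real implementation lives in the PRs themselves.

```python
import json
from dataclasses import dataclass, field
from typing import Callable, Dict, Iterator, List


@dataclass
class HttpRequest:
    """Layer 1: generic, MCP-agnostic request payload."""
    method: str
    url: str
    headers: Dict[str, str] = field(default_factory=dict)
    body: bytes = b""


@dataclass
class HttpResponse:
    """Layer 1: generic response whose body arrives as a stream of chunks."""
    status: int
    headers: Dict[str, str]
    body_chunks: Iterator[bytes]


class ExecutorHttpRunner:
    """Layer 2: the only component that performs (stand-in) network I/O."""

    def __init__(self, send: Callable[[HttpRequest], HttpResponse]):
        self._send = send
        self.seen_urls: List[str] = []

    def run(self, request: HttpRequest) -> HttpResponse:
        self.seen_urls.append(request.url)
        return self._send(request)


class McpHttpTransport:
    """Layer 3: presents the runner as a JSON-RPC transport."""

    def __init__(self, runner: ExecutorHttpRunner, url: str):
        self._runner = runner
        self._url = url

    def call(self, message: dict) -> dict:
        request = HttpRequest(
            method="POST",
            url=self._url,
            headers={"Content-Type": "application/json"},
            body=json.dumps(message).encode("utf-8"),
        )
        response = self._runner.run(request)
        # Reassemble the streamed body before decoding it.
        return json.loads(b"".join(response.body_chunks))


def fake_server(request: HttpRequest) -> HttpResponse:
    """Stand-in remote server: echoes the request id with an empty tool list."""
    incoming = json.loads(request.body)
    reply = json.dumps(
        {"jsonrpc": "2.0", "id": incoming["id"], "result": {"tools": []}}
    ).encode("utf-8")
    mid = len(reply) // 2  # split in two to imitate a streamed body
    return HttpResponse(200, {"Content-Type": "application/json"},
                        iter([reply[:mid], reply[mid:]]))


def make_transport(config: dict, runner: ExecutorHttpRunner) -> McpHttpTransport:
    """Layer 4: wiring. Only configs marked remote take the executor-backed path."""
    if config.get("experimental_environment") != "remote":
        raise ValueError("this sketch only covers the remote path")
    return McpHttpTransport(runner, config["url"])


runner = ExecutorHttpRunner(fake_server)
transport = make_transport(
    {"url": "https://mcp-tools.internal.corp/jira",
     "experimental_environment": "remote"},
    runner,
)
result = transport.call({"jsonrpc": "2.0", "id": 7, "method": "tools/list"})
```

The transport never opens a connection itself; it only hands generic requests to the runner, which mirrors the point that all HTTP traffic flows through the executor.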

Enterprise Patterns This Enables

Shared Team Tool Registries

Instead of every developer running their own MCP servers locally, teams can deploy shared MCP servers as services:

    # .codex/config.toml
    [mcp.servers.team-jira]
    type = "streamable-http"
    url = "https://mcp-tools.internal.corp/jira"
    experimental_environment = "remote"

    [mcp.servers.team-confluence]
    type = "streamable-http"
    url = "https://mcp-tools.internal.corp/confluence"
    experimental_environment = "remote"

Centrally Managed Auth & Monitoring

Remote MCP servers can have their own:

- OAuth/OIDC authentication flows

- Rate limiting and quota management

- Centralized logging and audit trails

- Independent scaling based on team demand
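As one illustration of what central management could look like on the server side, the sketch below combines a per-client token-bucket rate limit with an audit log. It is a generic pattern under assumed names (`TokenBucket`, `QuotaGate`, the `"alice"` client and `"jira.search"` tool are all hypothetical), not something the PR stack provides.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class TokenBucket:
    """Classic token bucket: each allowed call spends one token."""
    capacity: float
    refill_per_second: float
    tokens: float
    last_refill: float

    def allow(self, now: float) -> bool:
        # Refill in proportion to elapsed time, capped at capacity.
        elapsed = max(0.0, now - self.last_refill)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.last_refill = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


class QuotaGate:
    """Per-client rate limiting plus an append-only audit trail."""

    def __init__(self, capacity: float, refill_per_second: float):
        self._capacity = capacity
        self._refill = refill_per_second
        self._buckets: Dict[str, TokenBucket] = {}
        self.audit_log: List[Tuple[float, str, str, bool]] = []

    def check(self, client_id: str, tool: str, now: float) -> bool:
        bucket = self._buckets.get(client_id)
        if bucket is None:
            # New clients start with a full bucket.
            bucket = TokenBucket(self._capacity, self._refill, self._capacity, now)
            self._buckets[client_id] = bucket
        allowed = bucket.allow(now)
        self.audit_log.append((now, client_id, tool, allowed))
        return allowed


gate = QuotaGate(capacity=2, refill_per_second=1.0)
# Three calls at the same instant: the third exceeds the burst capacity.
burst = [gate.check("alice", "jira.search", now=0.0) for _ in range(3)]
# One second later a single token has been refilled.
later = gate.check("alice", "jira.search", now=1.0)
```

Passing `now` explicitly keeps the example deterministic; a real service would read a monotonic clock instead.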

Multi-Agent Tool Sharing

In agentic pod architectures, multiple agents (running in parallel worktrees) can share the same remote MCP server instances, avoiding the overhead of spawning duplicate local tool processes.

Air-Gapped Development with Cloud Tools

Developers in restricted networks can connect to pre-approved MCP servers in controlled cloud environments, enabling tool access without local network exposure.

Transport Matrix (Complete as of April 2026)

| Transport | Local | Remote |
|---|---|---|
| stdio | ✅ (original) | ✅ (PR #18212) |
| HTTP | ✅ (0.119.0+) | ✅ (PRs #18581–#18584, open at time of writing) |

What’s Next

These PRs are currently open. If merged into 0.122.0, expect:

- Documentation updates on experimental_environment = "remote" for HTTP configs

- Potential promotion from the experimental_ prefix in a future release

- Community MCP server hosting solutions (similar to plugin marketplace)

Implications for the Agentic Pod

This is a foundational piece for enterprise multi-agent architectures. When you combine remote HTTP MCP with:

- Subagent parallelism (custom agents in TOML)

- Git worktree isolation (parallel development)

- Goal mode (autonomous continuation with token budgets)

- Guardian/auto-review (oversight agents)

…you get a complete stack for running distributed, tool-rich agentic workflows at scale. The MCP servers become your team’s shared tool infrastructure, and agents become consumers of those tools regardless of where they run.

Source: openai/codex PRs #18581–#18584 by @aibrahim-oai, April 19, 2026